10 Seriously Epic Computer Software Bugs

The majority of software bugs are small inconveniences that can be overcome or worked around by the user – but there are some notable cases where a simple mistake has affected millions, to one degree or another, and even caused injury and loss of life.

Software is written by humans – and every piece of software therefore has bugs, or “undocumented features” as a salesman might call them. That is, the software does something that it shouldn’t, or doesn’t do something that it should. These bugs can be due to bad design, misunderstanding of a problem, or just simple human error – just like a typo in a book. However, whereas a book is read by a human who can usually infer the meaning of a misspelled word, software is read by computers, which are comparatively stupid, and will do only what they’re told.

Here are ten cases where the consequences of these bugs were enormous, in some way or another:

The Therac-25 was a machine for administering radiation therapy, generally for treating cancer patients. It had two modes of operation. The first consisted of an electron beam targeted directly at the patient in small doses for a short amount of time. The second aimed the electron beam at high energy levels at a metal ‘target’ first, which would essentially convert the beam into X-rays that were then passed into the patient.

In previous models of the Therac machine, for this second mode of operation, there were physical fail-safes to ensure that this target was in place as, without it, very high energy beams could be mistakenly fired directly into the patient. In the new model, these physical fail-safes were replaced by software ones.

Unfortunately, there was a bug in the software: an ‘arithmetic overflow’ sometimes occurred during automatic safety checks. This means that the system was using a number in its internal calculations that was too big for it to store. If, at this precise moment, the operator was configuring the machine, the safety checks would fail and the metal target would not be moved into place. The result was that beams around 100 times stronger than the intended dose could be fired into a patient, causing radiation poisoning. This happened on 6 known occasions and led to the later deaths of 4 patients.
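To make the idea of an arithmetic overflow concrete, here is a minimal Python sketch. It is not the Therac-25 code: the one-byte flag, the rule that zero means "nothing to check", and both function names are assumptions for illustration, loosely based on published accounts of a small counter that rolled over to zero.

```python
def increment_byte(value: int) -> int:
    """Add 1 to a value stored in a single byte (0..255), wrapping on overflow."""
    return (value + 1) & 0xFF


def passes_that_skip_check(total_passes: int) -> list[int]:
    """Return the pass numbers on which a zero flag would wrongly skip the check.

    Hypothetical rule: a non-zero flag means "run the safety check",
    while zero means "nothing to check".
    """
    flag = 0
    skipped = []
    for pass_number in range(1, total_passes + 1):
        flag = increment_byte(flag)   # intended to mean "check still pending"
        if flag == 0:                 # overflow: 255 + 1 wrapped around to 0
            skipped.append(pass_number)
    return skipped


print(increment_byte(255))            # 0
print(passes_that_skip_check(600))    # [256, 512]
```

The point of the sketch is that nothing crashes: the number quietly wraps around, and a check that depends on it is quietly skipped.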

The hugely successful World of Warcraft (WoW), an online computer game created by Blizzard Entertainment, suffered an embarrassing glitch following an update on September 13, 2005 – causing mass (fictional) death. The update introduced a new enemy character, Hakkar, who had the ability to inflict a disease, called Corrupted Blood, upon player characters that would drain their health over a period of time. This disease could be passed from player to player, just as in the real world, and had the potential to kill any character contracting it. The effect was meant to be strictly localised to the area of the game that Hakkar inhabited.

However, one thing was overlooked: players were able to teleport to other areas of the game while still infected and pass the disease on to others – which is exactly what happened. I can’t find any figures on the body count, but entire cities within the game world became no-go areas, with dead players’ corpses littering the streets. Fortunately, player death is not permanent in WoW, and the event was soon over when the administrators of the game reset the servers and applied further software updates. Particularly interesting is how closely players’ reactions in the game mirrored the way people might react to a similar real-life incident.

Affecting around 55 million people, mainly in the northeastern United States but also in Ontario, Canada, the Northeast blackout of 2003 was one of the biggest power blackouts in history. It started when a power plant on the southern shore of Lake Erie in Ohio went offline due to high demand, which put the rest of the power network under greater stress. When power lines are under heavier electrical load, they heat up, and the material making up the cable (usually aluminum and steel) expands. Several power lines sagged as they expanded and touched trees, which knocked the lines out and put the system under yet more pressure. This led to a cascading effect that eventually reduced the power network to 20% of normal output.

While the blackout itself was not caused by a software bug, it could have been averted were it not for a bug in the control centre alarm system. In what is called a ‘race condition’, two parts of the system were competing over the same resource and were unable to resolve the conflict, which caused the alarm system to freeze and stop processing alerts. Unfortunately, the alarm system failed ‘silently’, meaning it broke but didn’t notify anybody that it had broken. As a result, no audio or visual alerts reached the control room staff, who over-relied on such things for situational awareness. The aftermath was well reported: many areas were left without power for several days, and industry, utilities, and communications were all affected. The blackout was also blamed as at least a contributing factor in several deaths.
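For the curious, a race condition can be sketched in a few lines of Python. This is only an illustration of the general class of bug, not the alarm system's code: the `AlarmLog` class and its methods are hypothetical, and the real failure stalled the alarm process rather than miscounting. The unsafe update is split into two steps so that the unlucky interleaving can be forced on purpose instead of left to chance.

```python
import threading


class AlarmLog:
    """Hypothetical shared resource: a count of alarms processed."""

    def __init__(self) -> None:
        self.count = 0
        self._lock = threading.Lock()

    def unsafe_steps(self):
        """Read-modify-write split in two, so the interleaving can be shown."""
        seen = self.count        # step 1: read the shared value
        yield                    # another worker may run here
        self.count = seen + 1    # step 2: write back a now-stale result

    def safe_increment(self) -> None:
        """Same update, but the lock makes read and write one indivisible step."""
        with self._lock:
            self.count += 1


# Force the bad interleaving: both workers read before either writes.
racy = AlarmLog()
worker_a, worker_b = racy.unsafe_steps(), racy.unsafe_steps()
next(worker_a)
next(worker_b)
next(worker_a, None)
next(worker_b, None)
print(racy.count)   # 1, although two alarms were processed


def process_alarms(log: AlarmLog, how_many: int) -> None:
    for _ in range(how_many):
        log.safe_increment()


# With the lock, two real threads always agree on the total.
safe = AlarmLog()
threads = [threading.Thread(target=process_alarms, args=(safe, 1000)) for _ in range(2)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(safe.count)   # 2000
```

A lock is only one way of resolving the conflict; the design point is that the two parts of the program must agree on who touches the shared resource and when.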

In the world of software development, there are several commonly known bugs that programmers encounter and have to cater for. One such example is the ‘divide by zero’ bug, where a calculation is performed that divides a number by zero. Such a calculation has no defined result, at least not without using higher mathematics, and most software – for everything from supercomputers to pocket calculators – is written to take this scenario into account.

It was with some embarrassment, then, that the USS Yorktown suffered a complete failure of its propulsion system and was dead in the water for nearly 3 hours when a crew member typed a “0” into the on-board database management system, which was then used in a division calculation. The software had been installed as part of a wider programme to use computers to reduce the manpower needed to run some ships. Fortunately, the ship was engaged in maneuvers at the time of the incident rather than deployed in a combat environment, where the consequences could have been more severe.
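Guarding against this is usually a one-line check. The sketch below is a generic Python illustration, not anything taken from the ship's software; `safe_ratio` is a hypothetical helper that returns `None` instead of letting a zero entered by a user bring the program down.

```python
from typing import Optional


def safe_ratio(numerator: float, denominator: float) -> Optional[float]:
    """Divide two numbers, returning None when the denominator is zero."""
    if denominator == 0:
        return None              # caller decides what a missing result means
    return numerator / denominator


print(safe_ratio(10, 4))   # 2.5
print(safe_ratio(10, 0))   # None

# Without the guard, Python raises an error that must be caught somewhere.
try:
    10 / 0
except ZeroDivisionError as error:
    print(f"unguarded division failed: {error}")
```

Whether to return a placeholder, reject the input, or raise an error is a design choice; what matters is that the zero is handled deliberately rather than passed along.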

This one is a bit of a stretch, and may never have in fact happened, but – if it is true – it is a prominent example of a deliberately introduced software bug causing a big incident.

During the Cold War, when relations between the US and Soviet Russia were a tad frosty, the Central Intelligence Agency is said to have deliberately placed bugs inside software being sold by a Canadian company – software that was used for controlling the Trans-Siberian gas pipeline. The CIA believed that Russia was purchasing this system via a Canadian company as a means of covertly obtaining US technology, and that this would be an opportunity to feed them defective material.

Such practices were later referenced in the declassified “Farewell Dossier” where, amongst other things, it is alleged that faulty turbines were in fact used on a gas pipeline. Former Secretary of the Air Force Thomas C. Reed claims that a series of bugs was introduced so that the system would pass tests but break during actual use. Settings for pumps and valves were set to exceed the pressures that the pipeline could withstand, which led to an explosion said to be the largest non-nuclear explosion ever recorded.

These claims, however, have been contradicted by KGB veteran Vasily Pchelintsev, quoted in a Moscow Times report by Anatoly Medetsky, who says that the explosion was caused by sub-par construction rather than deliberate sabotage. Whatever the cause, no casualties were reported, as the explosion occurred in a very remote area.

Stanislav Petrov was the duty officer of a secret bunker near Moscow responsible for monitoring the Soviet early warning satellite system. Just after midnight, his team received an alert that the US had launched five Minuteman intercontinental ballistic missiles. Under the doctrine of mutually assured destruction that took hold during the Cold War, the response to an attack by one power would be a revenge attack by the other.

This meant that if the attack was genuine, they needed to respond quickly. However, it seemed strange that the US would attack with just a handful of warheads: although they would cause massive damage and loss of life, they wouldn’t be anywhere near sufficient to wipe out the Soviet opposition. Also, the radar stations on the ground weren’t picking up any contacts, although these couldn’t detect beyond the horizon because of the curvature of the Earth, which could have explained the delay.

Another consideration was the early warning system itself, which was known to have flaws and had been rushed into service in the first place. Petrov weighed all these factors and decided to rule the alert as a false alarm. Although Petrov didn’t have his finger on the nuke button as such, had he passed on a recommendation to his superiors that they take the attack as real, it could have led to all-out nuclear war. Whether based on experience, intuition, or just luck, Petrov’s decision was the right one.

It was later determined that the early detection software had picked up the sun’s reflection from the top of clouds and misinterpreted it as missile launches.

The seemingly never-ending war between media companies and pirates ebbs and flows every year. As soon as new ways of protecting and securely distributing media are found, new ways of circumventing and compromising these measures are uncovered.

Some would argue that Sony BMG went a step too far in 2005, when they introduced a new form of copy protection on some of their audio CDs. When played on a Windows computer, these CDs would install a piece of software called a ‘rootkit’. A rootkit is a form of software that burrows deep into a computer and alters certain fundamental processes. Though not always malicious in nature, a rootkit is often used to stealthily plant malicious and hard-to-detect (or hard-to-remove) software, such as viruses and trojans. In the case of Sony BMG, the aim was to control the way a Windows computer used the Sony CDs, preventing users from copying them or converting them to MP3s, which would help cut down on piracy of their media.

The rootkit achieved this – but by taking measures to hide itself from the user, it enabled viruses and other malicious software to hide along with it. The poorly thought-out implementation, and a growing perception that Sony BMG had no business sneakily manipulating users’ PCs, meant that the whole scheme backfired. The rootkit was classified as malware by many computer security companies, and the affair led to several lawsuits and a product recall of the offending CDs.

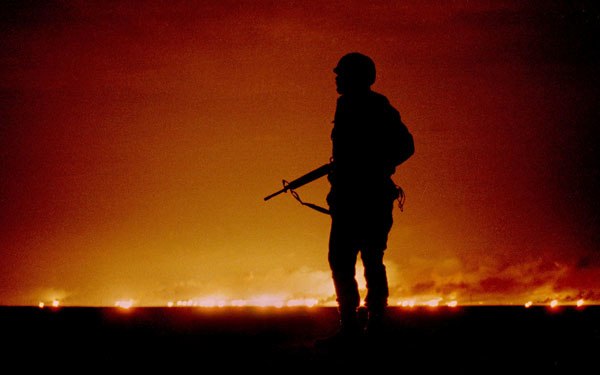

During Operation Desert Storm, the US military deployed the Patriot Missile System as a defense against aircraft and missiles – in this case Iraqi Al Hussein (SCUD) missiles. The tracking software for the Patriot missile uses the velocity of its target and the current time to predict where the target will be from one instant to the next. Since various targets may travel at speeds of up to Mach 5, these calculations need to be very accurate.

At the time, there was a bug in the targeting software which meant that the internal clock would ‘drift’ (much like any clock) further and further from the accurate time the longer the system was left running. The bug was already known about, and the workaround was simply to reboot the system regularly, thereby resetting the system clock.

Unfortunately, those in charge didn’t clearly understand how ‘regularly’ they should reboot the system, and it was left running for 100 hours. When an Iraqi missile was launched, targeting a US airfield in Dhahran, Saudi Arabia, it was detected by the Patriot missile system. However, by this point, the internal clock had drifted by 0.34 of a second, so when the system tried to calculate where the missile would be next, it was looking at an area of the sky over half a kilometer away from the missile’s true location. It promptly assumed there was no enemy missile after all and cancelled the interception. The missile carried on to its destination, where it killed 28 soldiers and injured a further 98.
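The arithmetic behind those figures can be roughly reconstructed. The sketch below is not the Patriot software; it follows a commonly cited explanation in which the clock counted tenths of a second and the value 0.1 was chopped to a fixed number of binary digits. The number of fractional bits (21), the tick rate and the missile speed of about 1,676 metres per second are all assumptions taken from that explanation, not from the text above.

```python
from fractions import Fraction

FRACTION_BITS = 21          # assumption: binary digits kept after the point
TICKS_PER_SECOND = 10       # assumption: the clock counted tenths of a second
SCUD_SPEED_M_PER_S = 1676   # assumption: approximate speed of the missile


def truncated_tenth(bits: int) -> Fraction:
    """Return 0.1 chopped (not rounded) to `bits` binary places."""
    return Fraction(2**bits // 10, 2**bits)


def clock_error_seconds(hours_running: int, bits: int = FRACTION_BITS) -> Fraction:
    """Accumulated timing error after the system has run for a number of hours."""
    error_per_tick = Fraction(1, 10) - truncated_tenth(bits)
    ticks = hours_running * 3600 * TICKS_PER_SECOND
    return error_per_tick * ticks


error = clock_error_seconds(100)
print(round(float(error), 4))                      # 0.3433 seconds
print(round(float(error) * SCUD_SPEED_M_PER_S))    # 575 metres
```

Under these assumptions, each tick is off by less than a ten-millionth of a second, which is why the problem only shows up after days of continuous running.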

The Millennium Bug, or Y2K, is the best-known bug on this list and the one that many of us remember hearing about at the time. Basically, this bug was the result of the combined short-sightedness of computer professionals in the decades leading up to the year 2000. In many computer systems, two digits were used to show the year, e.g. 98 instead of 1998, a practice that seemed reasonable and which pre-dated computers by some time.

Many didn’t anticipate, however, that there might be a problem when the date went past the year 2000. In such systems, the year 2000 could only be represented as ‘00’, which might confuse computers into thinking it meant the year 1900. Such a thing would break any calculations involving ranges of years that crossed the millennium. For example, it might show somebody born in 1920 and dying in 2001 as being minus 19 years old.
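The age example above takes only a few lines to reproduce. This is a minimal Python sketch with hypothetical function names, showing the same subtraction done with two-digit and four-digit years.

```python
def age_from_two_digit_years(birth_yy: int, death_yy: int) -> int:
    """Age computed the way a system storing only two-digit years would."""
    return death_yy - birth_yy


def age_from_full_years(birth_year: int, death_year: int) -> int:
    """Age computed from full four-digit years."""
    return death_year - birth_year


# Born in 1920 (stored as 20), died in 2001 (stored as 01).
print(age_from_two_digit_years(20, 1))      # -19
print(age_from_full_years(1920, 2001))      # 81
```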

In response to the problem, software companies rapidly updated their products, which already controlled just about everything from banking and payrolls to hospital computers and train ticket systems. Also, in recognition of its worldwide nature, the International Y2K Cooperation Centre was created in February 1999 to help coordinate the work required to prepare for the new millennium between governments and organisations, where needed. In the end, the New Year passed without too much incident, besides the universal mother-of-all-hangovers.

It’s hard to say how much of this success was a result of the work carried out to alleviate the problem, or whether the problem had been exaggerated in the media in the first place – probably a mix of both.

Although Y2K has passed, we’re not out of the woods just yet. Not all computers handle dates in the same way, and many computers based on the UNIX operating system handle dates by counting how many seconds have elapsed since 01/01/1970. For example, the date 01/01/1980 is 315,532,800 seconds after 01/01/1970. This number is stored on these computers as a “signed 32-bit integer”, which has a maximum value of 2,147,483,647. That means it can only handle dates that are up to 2,147,483,647 seconds after 01/01/1970 – which only takes us up to the 19th of January 2038, after which we may have problems again.
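These numbers are easy to check. The Python sketch below is only an illustration: `to_int32` is a hypothetical helper that imitates a signed 32-bit counter wrapping around, which is one plausible failure mode rather than a guarantee of how any particular system behaves.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1   # 2,147,483,647


def to_int32(value: int) -> int:
    """Wrap an integer the way a signed 32-bit counter would (an assumption)."""
    return (value + 2**31) % 2**32 - 2**31


def from_unix_seconds(seconds: int) -> datetime:
    """Convert a count of seconds since 01/01/1970 into a UTC date and time."""
    return EPOCH + timedelta(seconds=seconds)


seconds_to_1980 = int((datetime(1980, 1, 1, tzinfo=timezone.utc) - EPOCH).total_seconds())
print(seconds_to_1980)                              # 315532800
print(from_unix_seconds(INT32_MAX))                 # 2038-01-19 03:14:07+00:00
print(to_int32(INT32_MAX + 1))                      # -2147483648
print(from_unix_seconds(to_int32(INT32_MAX + 1)))   # 1901-12-13 20:45:52+00:00
```

In other words, one second after the limit, a counter that wraps would suddenly report a date in December 1901.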

This is especially worrying when we consider that UNIX-based software is more commonly found in “embedded systems” than in home PCs – that is, systems that have a very specific purpose closely related to their hardware, such as software for robotic assembly lines, digital clocks, network routers, security systems and so on.

Also, somebody is going to have to consider what we’re going to do on the 1st of January 10000. Not me though.